Modules

The project currently has two extension mechanisms, namely connectors and modules. This page describes what modules are and how they are leveraged by the control plane to build the data plane flow.

What are modules?

As described in the architecture page, the control plane generates a description of a data plane based on policies and application requirements. This description is known as a blueprint, and it includes components that the control plane deploys to fulfill different data-centric requirements. For example, a component that can mask data can be used to enforce a data masking policy, and a component that copies data can be used to create a local data copy to meet performance requirements.

Modules are the way to describe such data plane components and make them available to the control plane. A module is packaged as a Helm chart that the control plane can install to a workload's data plane. To make a module available to the control plane, it must be registered by applying a FybrikModule custom resource.

The functionality described by the module may be (a) deployed per workload, or (b) composed of one or more components that run independently of the workload and its associated control plane. In case (a), the control plane handles the deployment of the functional component. In case (b), where the functionality of the module runs independently and handles requests from multiple workloads, the control plane deploys a client module instead. This client module passes parameters to the external component(s) and monitors the status and results of the requests sent to them.

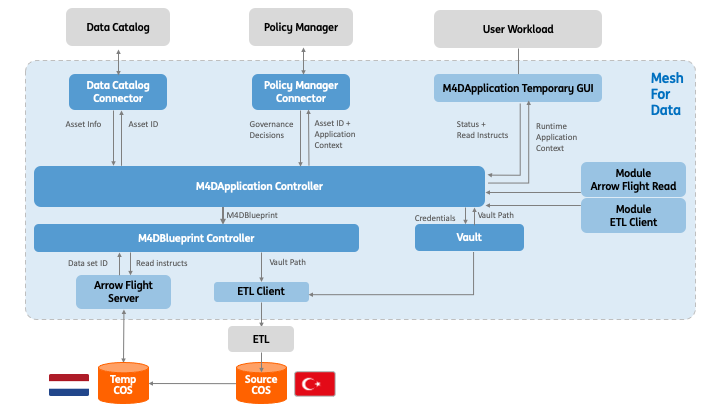

The diagram above shows an example with an Arrow Flight module that is fully deployed by the control plane, and a second module where the client is deployed by the control plane but the ETL component providing the functionality has been independently deployed and supports multiple workloads.
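The two deployment modes can be modeled in a few lines of Python. This is only an illustrative sketch and not Fybrik code: `ModuleSpec`, `plan_deployment`, and the names `etl-client-module` and `shared-etl-service` are hypothetical, chosen to mirror the two cases described above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModuleSpec:
    """Hypothetical, simplified view of a module (not the FybrikModule schema)."""
    name: str
    # Name of an independently deployed component that the module talks to,
    # or None when the module itself provides the full functionality.
    external_component: Optional[str] = None


def plan_deployment(module: ModuleSpec, workload: str) -> str:
    """Describe what the control plane would deploy for one workload."""
    if module.external_component is None:
        # Case (a): the functional component is deployed per workload.
        return f"{workload}: deploy {module.name} (full functionality)"
    # Case (b): only a client is deployed; the external component is shared.
    return f"{workload}: deploy {module.name} (client of {module.external_component})"


print(plan_deployment(ModuleSpec("arrow-flight-module"), "workload-1"))
print(plan_deployment(ModuleSpec("etl-client-module", "shared-etl-service"), "workload-1"))
```

In both cases the control plane installs something for the workload. The difference is whether that deployment carries the functionality itself or only forwards requests to a component managed outside Fybrik.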

Components that make up a module

There are several parts to a module:

- Optional external component(s): deployed and managed independently of Fybrik.

- Module Workload: the workload that runs once the Helm chart is installed by the control plane. Can be a client to the external component(s) or be independent.

- Module Helm Chart: the package containing the module workload that the control plane installs as part of a data plane.

- FybrikModule YAML: describes the functional capabilities and supported interfaces, and links to the Module Helm chart.

Registering a module

To make the control plane aware of the module so that it can be included in appropriate workload data flows, the administrator must apply the FybrikModule YAML in the fybrik-system namespace. Note that registration does not check that the module's Helm chart exists.

For example, the following registers the arrow-flight-module:

kubectl apply -f https://raw.githubusercontent.com/fybrik/arrow-flight-module/master/module.yaml -n fybrik-system

When is a module used?

There are three main data flows in which modules may be used:

- Read: preparing data to be read and/or actually reading the data.

- Write: writing a new data set or appending data to an existing data set.

- Copy: performing an implicit data copy on behalf of the application. The decision to do an implicit copy is made by the control plane, typically for performance or governance reasons.

A module may be used in one or more of these flows, as indicated in the module's YAML file.

Control plane choice of modules

A user workload description, FybrikApplication, includes a list of the data sets required, the technologies that will be used to access them, the access type (e.g. read, copy), and information about the location and the reason for the use of the data. This information, together with input from data and enterprise policies, determines which modules are chosen by the control plane and where they are deployed.
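As a rough illustration of the matching step, the sketch below filters registered modules by the requested flow and access technology. It is a simplified model under stated assumptions, not the control plane's actual selection logic: the classes, the `candidate_modules` function, and the protocol strings are hypothetical, and policy evaluation and placement decisions are left out entirely.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class RegisteredModule:
    """Hypothetical summary of a registered module."""
    name: str
    flows: FrozenSet[str]       # subset of {"read", "write", "copy"}
    protocols: FrozenSet[str]   # illustrative interface names, not real schema values


@dataclass(frozen=True)
class DatasetRequest:
    """Hypothetical summary of one data set entry in a workload description."""
    dataset: str
    flow: str
    protocol: str


def candidate_modules(request: DatasetRequest,
                      registry: List[RegisteredModule]) -> List[str]:
    """Return names of modules that support the requested flow and protocol."""
    return [
        m.name
        for m in registry
        if request.flow in m.flows and request.protocol in m.protocols
    ]


# Illustrative registry; the flow and protocol values are assumptions.
REGISTRY = [
    RegisteredModule("arrow-flight-module", frozenset({"read", "write"}),
                     frozenset({"arrow-flight"})),
    RegisteredModule("implicit-copy", frozenset({"copy"}), frozenset({"s3"})),
]

print(candidate_modules(DatasetRequest("sales", "read", "arrow-flight"), REGISTRY))
```

A real decision would also have to account for governance actions required by policies and for where the data and the workload are located, which is why this filter yields only candidates rather than a final choice.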

Available modules

The table below lists the currently available modules:

| Name | Description | FybrikModule | Prerequisite |
|---|---|---|---|
| arrow-flight-module | Reads and writes datasets while performing data transformations. | https://raw.githubusercontent.com/fybrik/arrow-flight-module/master/module.yaml | |
| airbyte-module | Reads datasets from data sources supported by the Airbyte tool. | https://raw.githubusercontent.com/fybrik/airbyte-module/main/module.yaml | |
| delete-module | Deletes S3 objects. | https://raw.githubusercontent.com/fybrik/delete-module/main/module.yaml | |
| implicit-copy | Copies data between any two supported data stores, for example S3 and Kafka, and applies transformations. | https://raw.githubusercontent.com/fybrik/data-movement-operator/master/modules/implicit-copy-batch-module.yaml, https://raw.githubusercontent.com/fybrik/data-movement-operator/master/modules/implicit-copy-stream-module.yaml | Datashim deployment; a FybrikStorageAccount resource deployed in the control plane namespace to hold the details of the storage that the module uses for copying the data. |

Contributing

Read Module Development for details on the components that make up a module and how to create a module.